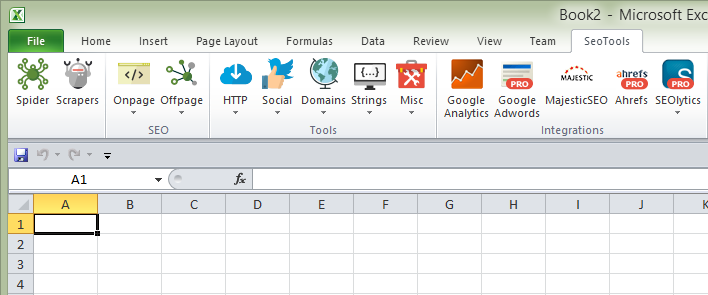

SeoTools 4.3 – Scrapers, Ahrefs and Adwords

Since it took me two years to release the last version of SeoTools, I wanted to release the next one faster. This one is pumped with new features!

TL;DR

This release is awesome!

Download latest version of SeoTools without delay!

Scrapers

In 4.3, I’m introducing a concept that I call Scrapers. Scrapers are written using a new XML format that allows you to easily extend SeoTools. Given a set of parameters, a scraper can fetch a web page, locate a particular part of the page and then parse a return value.

Scrapers can be used in formulas and in the SeoTools Spider.
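To make the fetch, locate and parse flow concrete, here is a minimal sketch in Python. It is not the SeoTools XML scraper format, just an illustration of the same idea. The names `run_scraper` and `fake_fetch`, the URL template and the regular expression are all made up for this example.

```python
import re
from typing import Callable, Optional
from urllib.parse import quote
from urllib.request import urlopen


def http_get(url: str) -> str:
    """Fetch a page over HTTP and return its body as text."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def run_scraper(
    url_template: str,
    pattern: str,
    params: dict,
    fetch: Callable[[str], str] = http_get,
) -> Optional[int]:
    """Fill in the URL, fetch the page, locate one value and parse it."""
    # 1. Build the request URL from the parameters (values are URL-encoded).
    encoded = {key: quote(str(value), safe="") for key, value in params.items()}
    url = url_template.format(**encoded)
    # 2. Fetch the page.
    html = fetch(url)
    # 3. Locate the part of the page we care about (first capture group).
    match = re.search(pattern, html)
    if match is None:
        return None
    # 4. Parse the return value: strip separators so "1,234" becomes 1234.
    digits = re.sub(r"[^\d]", "", match.group(1))
    return int(digits) if digits else None


# Hypothetical page content, served by a stub so no network access is needed.
def fake_fetch(url: str) -> str:
    return '<div id="stats">About <b>1,234</b> results</div>'


count = run_scraper(
    "https://example.com/search?q={query}",
    r"About <b>([\d,]+)</b> results",
    {"query": "site:example.org"},
    fetch=fake_fetch,
)
print(count)
```

Passing the fetch function in as a parameter keeps the sketch testable offline. A real scraper would use `http_get` or something similar instead of the stub.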

The following scrapers are currently shipped with SeoTools 4.3:

- Alexa.LinkCount

- Alexa.Popularity

- Alexa.Reach

- Bing.Index

- Delicious.Shares

- Dmoz.Entries

- Facebook.Likes

- Google.AnalyticsId

- Google.Index

- Google.Links

- Google.PlusOne

- Google.Results

- Klout

- LinkedIn.Shares

- Pintered.Pinned

- Stumbleupon.Likes

- Stumbleupon.Stumbles

- Twitter.Tweets

- Wikipedia.Links

- Yahoo.Directory

- Yahoo.Index

Creating your own scraper is quite simple. You can see how the included scrapers are built in the /scrapers/ directory. There will be a follow-up post about this, so stay tuned.

Let me know what scrapers you need. Just post in the SeoTools forum and I’ll help you out. If you create something cool please share with the rest of us!

Breaking change: The following functions have been removed and replaced by scrapers: GoogleIndexCount, TwitterCount, FacebookLikes, AlexaReach, AlexaPopularity, AlexaLinkCount, GoogleLinkCount, GoogleResultCount, DmozEntries, WikipediaLinks.

Ahrefs integration

I’m really excited for this new Ahrefs integration. To use the Ahrefs integration you need a SeoTools Pro license and an Ahrefs account.

Features:

- Ahrefs rank

- Anchors

- Backlinks

- Domain rating

- Metrics

- Pages

Google Adwords integration

Finally, the long-awaited Google Adwords integration is here! To use the integration you need a SeoTools Pro license and a Google Adwords API key.

Features:

- Adwords Query Language (AWQL)

- Campaigns

- Keywords

- Keyword search volumes

- Keyword ideas

AWQL is really cool. Using AWQL, you can pretty much query any data from your Adwords account. If you’re a serious Adwords pro, you need to learn AWQL!
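To give a feel for what an AWQL query looks like, here is a small Python sketch that assembles one as a string. The helper `build_awql` is hypothetical and not part of SeoTools. The report and field names are the ones I remember from the AdWords API reports, so check them against the official report reference before use. How the query is passed to SeoTools is not covered here.

```python
def build_awql(fields, report, where=None, during=None):
    """Assemble an AWQL report query string from its parts."""
    query = "SELECT " + ", ".join(fields) + " FROM " + report
    if where:
        # Multiple conditions are combined with AND.
        query += " WHERE " + " AND ".join(where)
    if during:
        # Either a named range such as LAST_7_DAYS or explicit dates.
        query += " DURING " + during
    return query


# Assumed report and field names; verify against the AdWords report docs.
query = build_awql(
    fields=["CampaignName", "Impressions", "Clicks", "Cost"],
    report="CAMPAIGN_PERFORMANCE_REPORT",
    where=["Impressions > 0"],
    during="LAST_7_DAYS",
)
print(query)
```

The result reads much like SQL: pick the columns, name the report, filter, and choose a date range.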

Other notable improvements

- Inserting values from wizards is now super fast! Also fixed a large performance issue with Dump(). This will make a big difference in the SeoTools experience.

- MajesticSEO Updates:

  - Added Skip parameter to Anchor texts, Backlinks and New&Lost Backlinks.

  - Added new commands Top pages, Topics and Search by keyword.

  - You can now enter “unlimited” urls in Index data.

- New icons!

- Updated how delays between requests are managed. In HttpSettings you can write:

  <IntervalBetweenRequests RandomFrom="1000" RandomTo="1500" IfSame="Host"/>

  This ensures that requests to the same host are executed with a random delay of between 1000 and 1500 ms. IfSame can be "Host" (default), "Domain" or "Url". Some scrapers use this as a strategy to avoid getting blocked too quickly.

- Added a default user agent in HttpSettings, as some sites return an HTTP error when none is set. Requests are made to look like Chrome 39 on Windows 8.1.

- Fixed some Spider issues with GooglePageSpeed and GooglePageRank columns. Some other stability improvements.

- Updated available metrics and dimensions in Google Analytics Core Reporting. Also treating all dimensions as strings.

- You can now fetch more than 1000 rows (if you have Pro) using Analytics formulas.

- SeoTools.config.xml is now saved in the AppData directory if saving to the SeoTools directory fails. If SeoTools.config.xml is not found in the SeoTools installation path, one is created.

- Added some stats to the Spider progress windows.

- Fixed problem in Spider when creating report and there’s no active workbook.

- HttpStatus and UnshortUrl functions now use global HttpSettings. The HttpStatus column uses HttpSettings from the wizard if defined, otherwise global HttpSettings. This is required when scraping mobile sites where you need to set the User-Agent.

- HttpStatus column in the Spider now has an option to show the “final” http response status code or the “first”.

- Updated HtmlAgilityPack to solve stack overflow exceptions on certain pages, improving Spider stability.

- Google PageSpeed can now query mobile results.

- Fixed bug with backlinks in SEOlytics not working.
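The IntervalBetweenRequests setting from the list above can be pictured with a small Python sketch. This is not how SeoTools implements it internally; it only illustrates the idea of a random delay between requests that share the same host, domain or URL. The class `RequestThrottle` and its parameter names are invented for the example, and the "Domain" rule here naively takes the last two labels of the host name, which is wrong for suffixes such as co.uk.

```python
import random
import time
from urllib.parse import urlsplit


class RequestThrottle:
    """Enforce a random minimum interval between requests with the same key."""

    def __init__(self, random_from_ms=1000, random_to_ms=1500, if_same="Host",
                 clock=time.monotonic, sleep=time.sleep):
        if if_same not in ("Host", "Domain", "Url"):
            raise ValueError("if_same must be 'Host', 'Domain' or 'Url'")
        self.random_from_ms = random_from_ms
        self.random_to_ms = random_to_ms
        self.if_same = if_same
        self.clock = clock
        self.sleep = sleep
        self._last_request = {}  # key -> time the last request was released

    def key_for(self, url):
        """Return the grouping key that decides which requests are 'the same'."""
        host = urlsplit(url).hostname or ""
        if self.if_same == "Url":
            return url
        if self.if_same == "Domain":
            # Naive assumption: the domain is the last two labels of the host.
            return ".".join(host.split(".")[-2:])
        return host

    def wait(self, url):
        """Sleep if needed before requesting url; return the seconds slept."""
        key = self.key_for(url)
        now = self.clock()
        delay = 0.0
        last = self._last_request.get(key)
        if last is not None:
            # Pick a fresh random interval for every request.
            interval = random.uniform(self.random_from_ms, self.random_to_ms) / 1000.0
            delay = max(0.0, last + interval - now)
            if delay > 0:
                self.sleep(delay)
        self._last_request[key] = now + delay
        return delay


# Demo with a no-op sleep so the example returns immediately.
throttle = RequestThrottle(sleep=lambda seconds: None)
for url in ("http://example.com/a", "http://example.com/b", "http://example.org/"):
    print(url, round(throttle.wait(url), 3))
```

In real use you would leave `sleep` at its default and call `wait(url)` right before each request.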

Download latest version of SeoTools

Thanks for being the best community one could have! : ) SeoTools is built on all the great suggestions by growth hackers from all over the world.

/Niels

PS. If you like my work please consider purchasing a Pro license.
